Create a DAG for scheduling a data pipeline on Zetaris Lightning UI

This page lists the steps to follow in order to schedule a data pipeline, or any other data object, using a simple select query.

Prerequisites:

- The pipeline should already be created in the environment for which we are using the API.

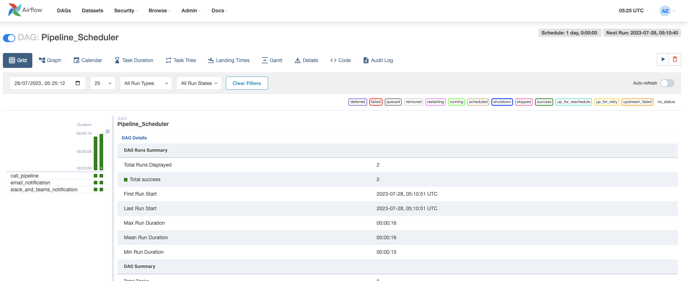

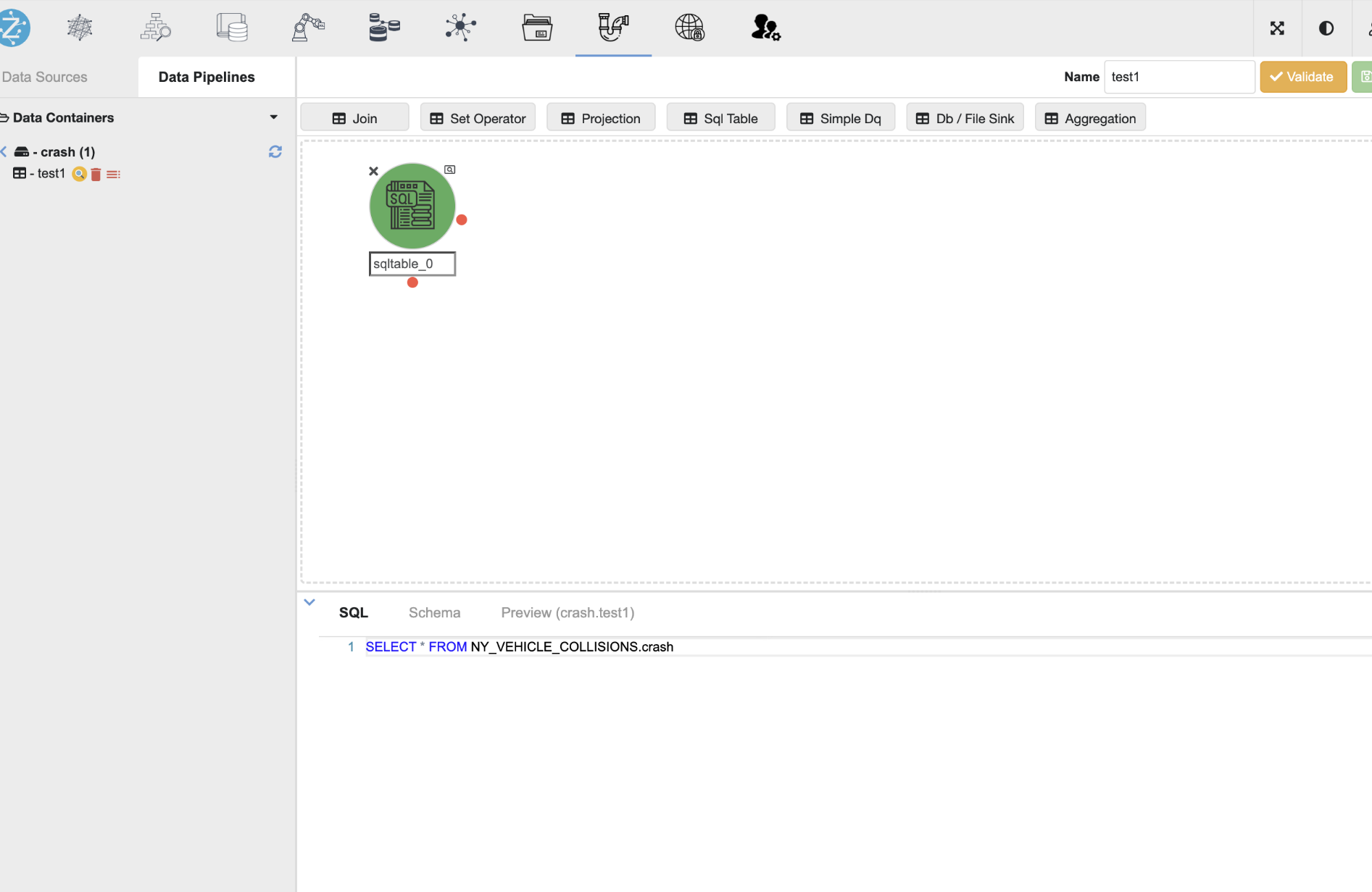

Image: Data Pipeline on Zetaris UI

- Before running the DAGs, make sure the Microsoft Teams and Slack connections are set up in Airflow for notifications. Refer to the Version Control In Zetaris documentation for the setup steps.

- We need to create variables on the Airflow UI to provide parameters for the pipeline scheduling process. Follow these steps:

  - On the Airflow UI, navigate to the Admin section and select "Variables" from the dropdown list.

  - Upload the Airflow Variables content as a JSON file by selecting "Choose File", and then import it by clicking "Import Variables" in Airflow.

- Please note:

  - By default, the installation of Airflow includes predefined variables related to Airflow configuration and the Zetaris Environment.

  - The table below contains example values or parameter names, which should be replaced with appropriate, specific values.

| Variable Name | Value |
| --- | --- |
| Email_Address | `<email_address_for_notifications>` |
| Select_Pipeline_Query | `<select-query-pipeline>` |
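The JSON file uploaded through "Import Variables" is a flat object of variable names and values. The following is a minimal sketch of how such a file could be produced from the table above. The file name `airflow_variables.json` and the helper names are assumptions made for illustration; the values are the placeholders from the table and must be replaced before importing.

```python
import json
import re
from pathlib import Path

# Placeholder values copied from the table; replace them with real values.
AIRFLOW_VARIABLES = {
    "Email_Address": "<email_address_for_notifications>",
    "Select_Pipeline_Query": "<select-query-pipeline>",
}

# A value that is still wrapped in angle brackets is treated as unreplaced.
PLACEHOLDER = re.compile(r"^<.*>$")


def unreplaced(variables: dict) -> list:
    """Return the names of variables that still hold a placeholder value."""
    return [name for name, value in variables.items() if PLACEHOLDER.match(value)]


def write_variables_file(path: str = "airflow_variables.json") -> Path:
    """Write the variables as a flat JSON object of name/value pairs."""
    target = Path(path)
    target.write_text(json.dumps(AIRFLOW_VARIABLES, indent=2), encoding="utf-8")
    return target


if __name__ == "__main__":
    pending = unreplaced(AIRFLOW_VARIABLES)
    if pending:
        print("Replace placeholder values before importing:", ", ".join(pending))
    print("Wrote", write_variables_file())
```

The resulting file can then be selected with "Choose File" on the Variables page. Checking for leftover placeholders first avoids importing a query or email address that was never filled in.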

- After setting up Airflow and defining the necessary variables, you are ready to execute the 'Pipeline_Scheduler' DAG.